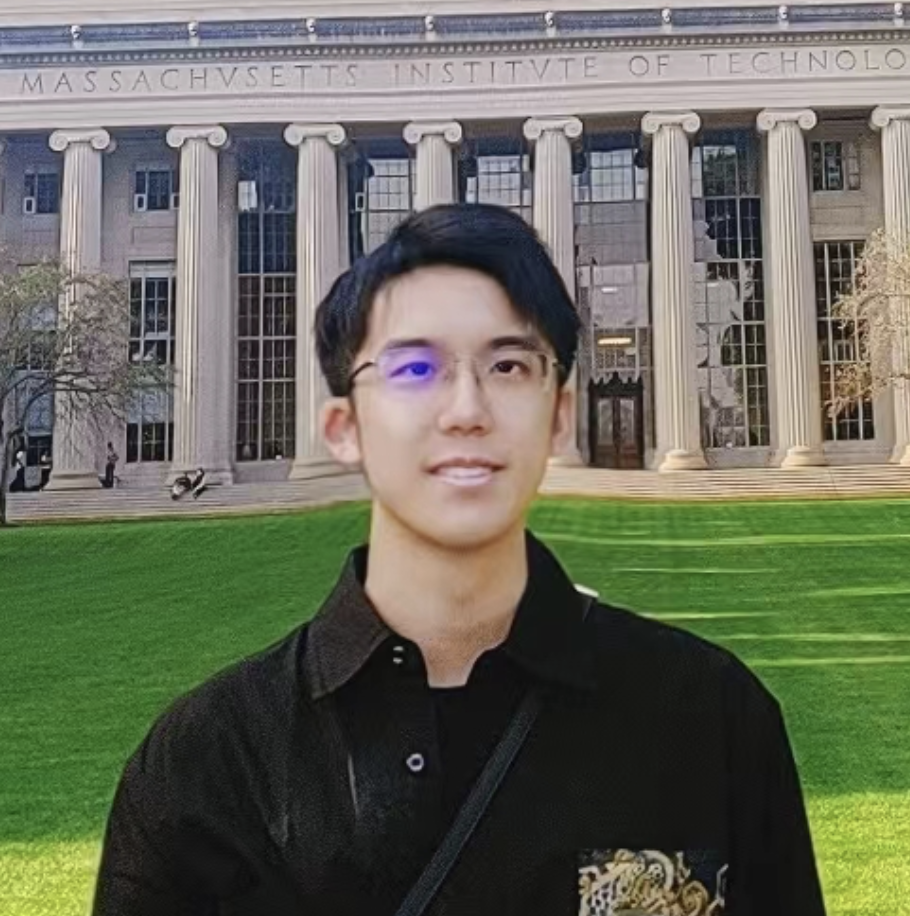

About me

Hi, I’m Yilun Xu, a research scientist at Google DeepMind, working on generative models that simulate the world 🌎. Previously, I co-led the diffusion distillation efforts at NVIDIA.

I received my PhD from MIT EECS, advised by Tommi Jaakkola. I obtained my Bachelor’s degree from the Turing Class in the EECS Department at Peking University, working with Yizhou Wang (PKU) and Stefano Ermon (Stanford). At NVIDIA, I worked with Weili Nie, Arash Vahdat, and Ming-Yu Liu.

My current research focuses on deep generative modeling:

- (i) New models: PFGM [10], PFGM++ [13], HGF [18], t-EDM [21]

- (ii) Training: STF [11], DisCo-Diff [16], Style Control [9]

- (iii) Sampling: Restart sampling [14], Particle Guidance [15], Anytime AR [5]

- (iv) Discrete diffusion: DDPD [19], EDLM [20]

- (v) Diffusion distillation: TCM [22], f-distill [23]

I have also worked on bridging machine learning and information theory [4, 3, 2, 1], including V-information.

Contact: ylxu@google.com

Publications

(*) denotes equal contribution

- COSMOS-NANO

Distilled version of COSMOS video foundation models. Featured in Jensen Huang's CES / GTC 2025 keynote.

NVIDIA

- One-step Diffusion Models with f-Divergence Distribution Matching

Yilun Xu, Weili Nie, Arash Vahdat.

Preprint, coming soon

[PDF], [Project Page]

- Truncated Consistency Models

Sangyun Lee, Yilun Xu, Tomas Geffner, Giulia Fanti, Karsten Kreis, Arash Vahdat, Weili Nie.

In International Conference on Learning Representations (ICLR), 2025

[PDF], [Code], [Project Page]

- Heavy-Tailed Diffusion Models

Kushagra Pandey, Jaideep Pathak, Yilun Xu, Stephan Mandt, Michael Pritchard, Arash Vahdat, Morteza Mardani.

In International Conference on Learning Representations (ICLR), 2025

[PDF]

- Energy-Based Diffusion Language Models for Text Generation

Minkai Xu, Tomas Geffner, Karsten Kreis, Weili Nie, Yilun Xu, Jure Leskovec, Stefano Ermon, Arash Vahdat.

In International Conference on Learning Representations (ICLR), 2025

[PDF]

- Think While You Generate: Discrete Diffusion with Planned Denoising

Sulin Liu, Juno Nam, Andrew Campbell, Hannes Stärk, Yilun Xu, Tommi Jaakkola, Rafael Gómez-Bombarelli.

In International Conference on Learning Representations (ICLR), 2025

[PDF]

- Hamiltonian Score Matching and Generative Flows

Peter Holderrieth, Yilun Xu, Tommi Jaakkola.

In Neural Information Processing Systems (NeurIPS), 2024.

[PDF]

- On Physics-Inspired Generative Models

Yilun Xu.

PhD Thesis 🎓, Massachusetts Institute of Technology.

[PDF]

- DisCo-Diff: Enhancing Continuous Diffusion Models with Discrete Latents

Yilun Xu, Gabriele Corso, Tommi Jaakkola, Arash Vahdat, Karsten Kreis.

In International Conference on Machine Learning (ICML), 2024.

[PDF], [Project Page]

- Particle Guidance: non-I.I.D. Diverse Sampling with Diffusion Models

Gabriele Corso, Yilun Xu, Valentin De Bortoli, Regina Barzilay, Tommi Jaakkola.

In International Conference on Learning Representations (ICLR), 2024; Deep Inverse Workshop, Neural Information Processing Systems (NeurIPS), 2023 (Oral); Workshop on Diffusion Models, Neural Information Processing Systems (NeurIPS), 2023.

[PDF], [Code]

- Restart Sampling for Improving Generative Processes

Yilun Xu*, Mingyang Deng*, Xiang Cheng*, Yonglong Tian, Ziming Liu, Tommi Jaakkola.

In Neural Information Processing Systems (NeurIPS), 2023.

[PDF], [Code]

News Coverage: [MarkTechPost]

- PFGM++: Unlocking the Potential of Physics-Inspired Generative Models

Yilun Xu, Ziming Liu, Yonglong Tian, Shangyuan Tong, Max Tegmark, Tommi Jaakkola.

In International Conference on Machine Learning (ICML), 2023.

[PDF], [Code], [Slide]

News Coverage: [MIT News], [Quanta Magazine], [Jiangmen Venture (CN)]

- GenPhys: From Physical Processes to Generative Models

Ziming Liu, Di Luo, Yilun Xu, Tommi Jaakkola, Max Tegmark.

Preprint, 2023

[PDF]

News Coverage: [MIT News], [Quanta Magazine]

- Stable Target Field for Reduced Variance Score Estimation in Diffusion Models

Yilun Xu*, Shangyuan Tong*, Tommi Jaakkola.

In International Conference on Learning Representations (ICLR), 2023

[PDF], [Code], [Slide], [Poster]

News Coverage: [MarkTechPost]

- Poisson Flow Generative Models

Yilun Xu*, Ziming Liu*, Max Tegmark, Tommi Jaakkola.

In Neural Information Processing Systems (NeurIPS), 2022. (Spotlight)

[PDF], [Code], [Slide], [Poster]

News Coverage: [MIT News], [Quanta Magazine], [AssemblyAI Blog], [MarkTechPost], [Synced (CN)], [PaperWeekly (CN)], [QbitAI (CN)]

- Controlling Directions Orthogonal to a Classifier

Yilun Xu, Hao He, Tianxiao Shen, Tommi Jaakkola.

In International Conference on Learning Representations (ICLR), 2022. (Spotlight)

[PDF], [Code], [Slide], [Poster]

- A Survey on Generative Diffusion Model

Hanqun Cao, Cheng Tan, Zhangyang Gao, Yilun Xu, Guangyong Chen, Pheng-Ann Heng, Stan Z. Li.

In IEEE Transactions on Knowledge and Data Engineering (TKDE), 2023.

[PDF], [Code]

- Learning Representations that Support Robust Transfer of Predictors

Yilun Xu, Tommi Jaakkola.

Preprint, 2022

[PDF], [Code]

- Can Subnetwork Structure Be the Key to Out-of-Distribution Generalization?

Dinghuai Zhang, Kartik Ahuja, Yilun Xu, Yisen Wang, Aaron Courville.

In International Conference on Machine Learning (ICML), 2021. (Long talk)

[PDF]

- Anytime Sampling for Autoregressive Models via Ordered Autoencoding

Yilun Xu, Yang Song, Sahaj Garg, Linyuan Gong, Rui Shu, Aditya Grover, Stefano Ermon.

In International Conference on Learning Representations (ICLR), 2021

[PDF], [Code], [Slide], [Poster]

- A Theory of Usable Information under Computational Constraints

Yilun Xu, Shengjia Zhao, Jiaming Song, Russell Stewart, Stefano Ermon.

In International Conference on Learning Representations (ICLR), 2020. (Oral)

[PDF], [Code], [Slide], [Video]

News Coverage: [Synced (CN)]

- TCGM: An Information-Theoretic Framework for Semi-Supervised Multi-Modality Learning

Xinwei Sun*, Yilun Xu*, Peng Cao, Yuqing Kong, Lingjing Hu, Shanghang Zhang, Yizhou Wang.

In European Conference on Computer Vision (ECCV), 2020. (Oral)

[PDF], [Code]

- L_DMI: A Novel Information-theoretic Loss Function for Training Deep Nets Robust to Label Noise

Yilun Xu*, Peng Cao*, Yuqing Kong, Yizhou Wang.

In Neural Information Processing Systems (NeurIPS), 2019

[PDF], [Code], [Slide]

News Coverage: [Synced (CN)], [PKU-CFCS News (CN)]

- Max-MIG: an Information Theoretic Approach for Joint Learning from Crowds

Peng Cao*, Yilun Xu*, Yuqing Kong, Yizhou Wang.

In International Conference on Learning Representations (ICLR), 2019

[PDF], [Code], [Slide]

News Coverage: [PKU-CFCS News (CN)]

Education

Sep. 2021* - May 2024: Massachusetts Institute of Technology

Ph.D. in Computer Science

Advisor: Tommi Jaakkola

*: remote during Sep. 2020 - Aug. 2021

Sep. 2016 - July 2020: Peking University

B.S. in Turing Class, Computer Science (summa cum laude)

Advisor: Yizhou Wang

Service

Conference Reviewer: NeurIPS 2020-2024; ICLR 2021-2025; ICML 2021-2024; CVPR 2025; ICCV 2025

PC member: ICLR 2022 PAIR2Struct, CVPR 2025 Visual Generative Modeling: What’s After Diffusion?

Panelist: ICML 2023 Structured Probabilistic Inference & Generative Modeling

Teaching Assistant for 6.S052/6.S952: Modeling with Machine Learning for CS, MIT, Spring 2023 (co-designed the problem set and lectures for this new course and received an outstanding TA rating of 6.9 out of 7.0 from students)

Miscellaneous

I am a national second-class table tennis player in China and won the men’s singles title at the PKU Freshman’s Cup (2016). I also played for the MIT table tennis team, winning 3rd place (group) at the 2022 NCTTA Upper New England Championship.